Overview

Red Label Vacations, Canada’s largest independent online travel company, had a problem. For five years running, their flagship website, RedTag.ca, suffered brownouts for up to an hour per day during peak months. Rob Gennaro, Digital Marketing Officer at Red Label Vacations, watched visitors grow frustrated as the site slowed or became unavailable, leaving them unable to make queries and complete transactions.

Gennaro checked his logs and saw the problem clearly: web scraping bots were hitting the database hard and creating bottlenecks, and the company’s homegrown solution for blocking IP addresses wasn’t working. “Bots were coming in through proxies, and IP addresses were being cycled as fast as we could block them,” said Gennaro.

Like many online travel sites, Red Label Vacations lacked insight into and control over web scrapers, and were powerless to block the bots and keep the site up for legitimate visitors. After five years of frustration, not to mention significant lost revenue during peak booking periods, Gennaro had had enough.

The Challenges

Complex web infrastructure required operational flexibility

Red Label Vacations’ complex web infrastructure included 5 servers for RedTag.ca, 5 servers for ITA (for flight technology), and an Akamai CDN. Their 19 web properties were spread across owned and outsourced data centers as well as a variety of web application stacks (e.g., .NET, PHP). Any solution for bot detection and mitigation would need to easily integrate across this complex environment while maintaining a light footprint.

RedTag.ca makes API calls into third-party systems such as ITA, Sabre, Softvoyage, Hotels.com and Cartrawler. Bots were executing search queries and significantly driving up the cost of those API calls.

Number one priority: human visitors booking travel

The primary concern for online travel sites is human visitor interaction. “I wanted a solution that could block malicious bots without impacting legitimate traffic,” said Gennaro. “And I wanted a partner I could rely on.”

His key requirements for bot detection included:

- The ability to block web scraping bots without impacting human visitors

- A method to accurately identify good bots, bad bots and humans

- Increased website availability and speed

- No changes to underlying web infrastructure

The Results

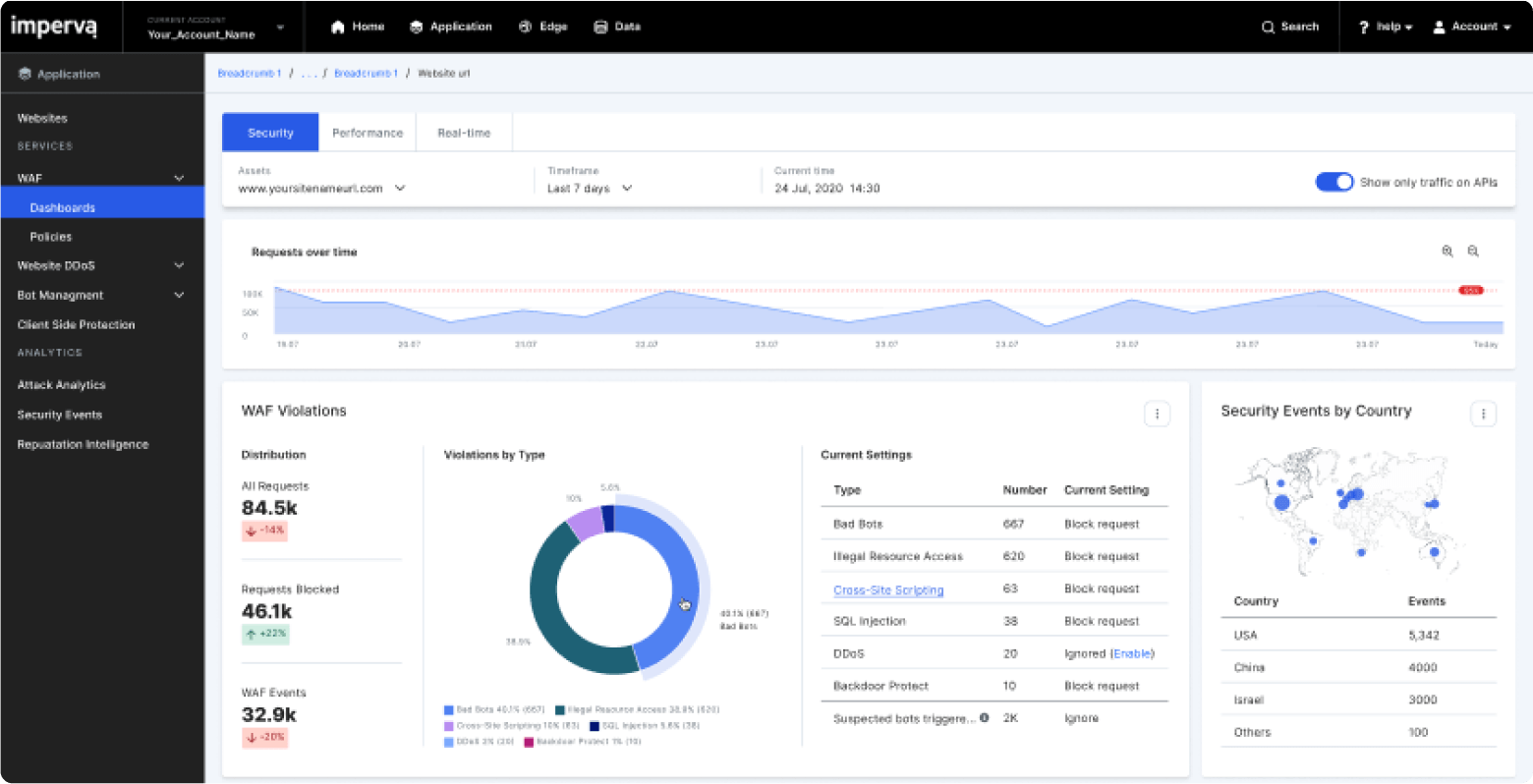

Red Label Vacations implements Imperva (formerly Distil Networks) in the cloud

Gennaro set up a free trial with Imperva and the full implementation followed within weeks. Red Label Vacations was delighted with the ease of setup and accuracy of the solution. “Imperva delivered on all of our key requirements,” said Gennaro. After optimizing their settings, Red Label Vacations was able to stop the malicious web scrapers and saw immediate improvements in their website performance metrics, including:

- Increased uptime from 99.6% to 99.9% (no downtime for the first time in five years)

- Faster page load times (no errors reported to human visitors)

- Improved SEO and Google quality score

- Increased user time on site

- Decreased bounce rate
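To put the uptime figures in perspective, the short sketch below converts an uptime percentage into hours of unavailability. It assumes the percentages are measured over a full calendar year of 8,760 hours, which the case study does not state; the function name `downtime_hours` is purely illustrative.

```python
# Illustrative arithmetic only: assumes uptime is measured over a full
# 365-day year, which the case study does not specify.
HOURS_PER_YEAR = 365 * 24  # 8,760 hours, ignoring leap years


def downtime_hours(uptime_percent: float, period_hours: float = HOURS_PER_YEAR) -> float:
    """Return the hours of unavailability implied by an uptime percentage."""
    return (100.0 - uptime_percent) / 100.0 * period_hours


before = downtime_hours(99.6)  # roughly 35 hours per year
after = downtime_hours(99.9)   # roughly 9 hours per year

print(f"99.6% uptime: about {before:.1f} hours unavailable per year")
print(f"99.9% uptime: about {after:.1f} hours unavailable per year")
```

Under that assumption, the move from 99.6% to 99.9% corresponds to roughly a fourfold reduction in unavailable hours.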

Red Label Vacations improves margins and reduces infrastructure costs

As a result of blocking the content thieves and improving website performance, Red Label Vacations realized several major financial wins:

- Increased revenue from more completed transactions during peaks

- Reduced cost and complexity of website infrastructure

- Reduced CDN costs by 65% compared with Akamai

- Reduced costs for third-party API calls

- Eliminated the burden on internal IT teams

Rob Gennaro no longer has to worry about web scrapers on RedTag.ca. Imperva’s self-optimizing protection solution and its Known Violators database mean that he is able “to set it and forget it.” Moving on to another Red Label Vacations property (Sunquest.ca), which also suffered from heavy web scraping, Gennaro didn’t hesitate to implement Imperva’s service. The results have been nothing short of amazing.

Watch the replay or check out the slides of our recent webinar featuring Rob Gennaro of Red Label Vacations; Rami Essaid, CEO of Imperva; and Kevin May, Editor of Tnooz, as they discuss “How to Clean Up Website Traffic from Bots & Spammers.”