2024 Bad Bot Report: Explore the latest trends and impact of unmanaged bot traffic

Get the report

Comprehensive digital security

Imperva latest news

KuppingerCole Report: Why Your Organization Needs Data-Centric Security

Read more

Data Security in 2024: Unveiling Strategies Against AI-Driven Cyber Threats

Watch webinar

Thales & Imperva join forces to create a global leader in cybersecurity

Find out more

Enterprises move to Imperva for world-class security

Faster response

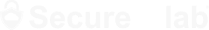

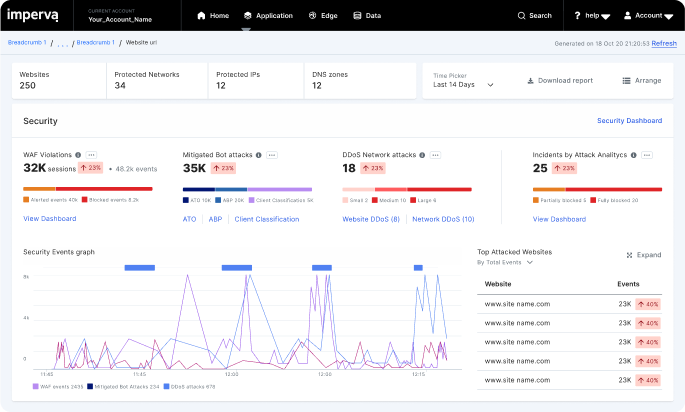

Accelerate containment with 3-second DDoS mitigation and same-day blocking of zero-days.

Deeper protection

Secure applications and data deployed anywhere with positive security models.

Consolidated security

Consolidate security point products for detection, investigation, and management under one platform.

Imperva products are quite exceptional

IT Security and Risk Management

Government Industry

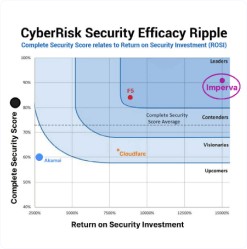

See why Imperva

Gartner® and Peer Insights™ are trademarks of Gartner, Inc. and/or its affiliates. All rights reserved. Gartner Peer Insights content consists of the opinions of individual end users based on their own experiences, and should not be construed as statements of fact, nor do they represent the views of Gartner or its affiliates. Gartner does not endorse any vendor, product or service depicted in this content nor makes any warranties, expressed or implied, with respect to this content, about its accuracy or completeness, including any warranties of merchantability or fitness for a particular purpose.